How global traffic routing works: Anycast, GSLB, and DNS

When you type a URL, half a second later a webpage loads. Sounds simple. But if you're in Singapore and the company behind that website has servers in Virginia, Ireland, and Singapore - something had to decide to send you to Singapore and not Virginia. That decision happens before your request even reaches a server. It happens before a load balancer sees it.

I had a vague understanding of how this worked for a while but never sat down and really traced through it. So here's me doing that.

The problem

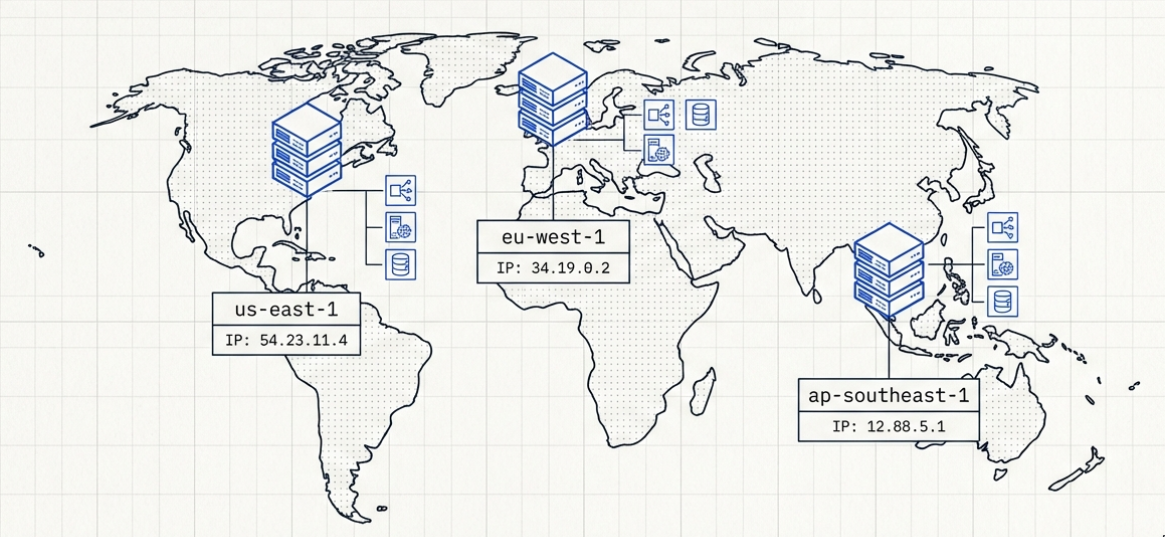

Let's say you're running a product called example.com. You've grown to the point where you have servers in three regions - us-east-1 in Virginia, eu-west-1 in Ireland, and ap-southeast-1 in Singapore. Each region has its own infrastructure, its own load balancer, its own database replica. Fully independent.

Now a user in Singapore visits example.com. Their browser needs an IP address to connect to. But which one? The Virginia ALB? The Ireland ALB? The Singapore ALB?

If you do nothing special, DNS just returns a fixed IP - or maybe a set of IPs in a round-robin with no awareness of where the user actually is. Your Singapore users are making round trips to Virginia, taking 200ms+ of latency purely from geography, while your Singapore servers sit mostly idle.

You need something smarter. Something that looks at where the request is coming from and routes it to the right place. There are two main ways to solve this, and they look similar from the outside but work completely differently.

DNS-based routing (GSLB)

The first approach works at the DNS layer. You'll sometimes see this called GSLB - Global Server Load Balancing - which is an older enterprise term for what is now basically a feature in Route 53 or Cloudflare DNS.

The idea is pretty simple. Normal DNS is static - it returns the same IP for a domain name regardless of who's asking. DNS-based routing puts logic inside the DNS server so it can decide what IP to return based on who's asking.

When your browser resolves example.com, instead of looking up a static record, the DNS server checks where the request is coming from, evaluates the health and latency of each region, and returns the IP that makes the most sense for that user. Someone in Singapore gets the Singapore ALB's IP. Someone in London gets the Ireland ALB's IP.
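Here's roughly what that decision looks like as code. This is a toy sketch, not how Route 53 is implemented: the `Region` class, the `pick_region` and `resolve` helpers, the latency numbers, and the IPs (taken from the documentation-only ranges) are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    ip: str
    healthy: bool
    # Hypothetical measured latency in ms from each client area to this region.
    latency_ms: dict


def pick_region(regions, client_area):
    """Return the healthy region with the lowest latency for client_area."""
    candidates = [r for r in regions if r.healthy and client_area in r.latency_ms]
    if not candidates:
        raise LookupError("no healthy region available")
    return min(candidates, key=lambda r: r.latency_ms[client_area])


# Illustrative data only: IPs are from documentation ranges, latencies are invented.
REGIONS = [
    Region("us-east-1", "192.0.2.10", True, {"SG": 230, "GB": 80, "US": 15}),
    Region("eu-west-1", "198.51.100.10", True, {"SG": 180, "GB": 12, "US": 85}),
    Region("ap-southeast-1", "203.0.113.10", True, {"SG": 5, "GB": 175, "US": 220}),
]


def resolve(client_area):
    """What the 'smart' DNS server would answer for a query from client_area."""
    return pick_region(REGIONS, client_area).ip
```

With this data, `resolve("SG")` returns the Singapore IP and `resolve("GB")` returns the Ireland one. The only difference from static DNS is that the answer is computed per query instead of read from a fixed record.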

The DNS server doesn't actually see your IP directly. It sees the IP of your DNS resolver, the server making the query on your behalf. So if you're in Singapore using Google's 8.8.8.8, Route 53 sees Google's resolver, not you. There's a standard called EDNS Client Subnet that partially fixes this by forwarding your subnet (a truncated prefix of your address, not the full IP) along with the query.
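To make the resolver-vs-client distinction concrete, here's a small sketch of what gets forwarded. It only uses Python's standard `ipaddress` module. The function names are mine, and the default prefix lengths (/24 for IPv4, /56 for IPv6) follow the truncation recommended in RFC 7871; individual resolvers may choose differently.

```python
import ipaddress


def ecs_subnet(client_ip, v4_prefix=24, v6_prefix=56):
    """Truncate a client address to the subnet a resolver might forward."""
    addr = ipaddress.ip_address(client_ip)
    prefix = v4_prefix if addr.version == 4 else v6_prefix
    # strict=False lets us pass a host address and get its containing network.
    return ipaddress.ip_network(f"{client_ip}/{prefix}", strict=False)


def geolocation_source(resolver_ip, client_subnet=None):
    """Which address the authoritative DNS server can use to locate the user."""
    if client_subnet is not None:
        return str(client_subnet)  # resolver sent EDNS Client Subnet
    return resolver_ip             # fall back to the resolver's own address


without_ecs = geolocation_source("8.8.8.8")
with_ecs = geolocation_source("8.8.8.8", ecs_subnet("203.0.113.77"))
```

Without the extension, the DNS server can only geolocate `8.8.8.8`. With it, the server sees `203.0.113.0/24`, which is enough to place the user without revealing the full address.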

Route 53 also runs continuous health checks against your endpoints. If your Singapore region goes down and the ALB stops responding, Route 53 pulls it from DNS responses.

New lookups for example.com now return Ireland or Virginia instead.
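A health check like this usually isn't "one failed probe and you're out". Here's a minimal sketch that assumes an endpoint is pulled after a number of consecutive failures. The `HealthTracker` class and the threshold of 3 are assumptions for illustration, and this version recovers after a single success, which is a simplification.

```python
class HealthTracker:
    """Marks an endpoint unhealthy after N consecutive failed probes."""

    def __init__(self, failure_threshold=3):
        self.failure_threshold = failure_threshold
        self.consecutive_failures = {}

    def record(self, endpoint, ok):
        """Record one probe result; a success resets the failure streak."""
        if ok:
            self.consecutive_failures[endpoint] = 0
        else:
            self.consecutive_failures[endpoint] = (
                self.consecutive_failures.get(endpoint, 0) + 1
            )

    def is_healthy(self, endpoint):
        return self.consecutive_failures.get(endpoint, 0) < self.failure_threshold


def healthy_answers(tracker, endpoints):
    """Endpoints that are still eligible to appear in DNS responses."""
    return [e for e in endpoints if tracker.is_healthy(e)]
```

Feeding three failed probes for the Singapore endpoint into `record` removes it from `healthy_answers`, so new lookups only ever see Ireland and Virginia.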

The TTL problem

DNS-based routing has one fundamental weakness though, and it's caching.

When Route 53 returns an IP, that response gets cached - by the client, by every resolver in the chain - for the duration of the TTL you set. Usually somewhere between 60 and 300 seconds.

So when your Singapore region goes down and Route 53 starts returning different IPs, everyone who already cached the old answer keeps hammering the dead Singapore endpoint until their cache expires. Failover is never instant.

You can set a really short TTL to minimize it, but then resolvers re-query more often, which means more requests pay the cost of a fresh DNS lookup.
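The trade-off is easy to put numbers on. This is back-of-the-envelope arithmetic, assuming failover time is roughly "time to detect the failure" plus "time for the last cached answer to expire". The 30-second check interval and threshold of 3 in the example are assumed values, not something from a real config.

```python
def worst_case_failover_seconds(check_interval_s, failure_threshold, ttl_s):
    """Detection time plus the longest a stale answer can stay cached."""
    detection = check_interval_s * failure_threshold
    return detection + ttl_s


def max_upstream_lookups_per_hour(ttl_s):
    """Upper bound on how often one resolver re-queries one name."""
    return 3600 / ttl_s


short_ttl = worst_case_failover_seconds(30, 3, ttl_s=60)    # 90 + 60
long_ttl = worst_case_failover_seconds(30, 3, ttl_s=300)    # 90 + 300
```

Under those assumptions, a 60-second TTL gives a worst case of 150 seconds and a 300-second TTL gives 390. Dropping the TTL from 300 to 60 also means a busy resolver can re-query up to 60 times an hour instead of 12.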

Anycast

The second approach works at a completely different layer. Instead of DNS deciding where to send you, the network itself figures it out.

Normally an IP address belongs to one specific machine in one specific place - that's called Unicast. You connect to 54.23.11.4, your packets go to exactly one server somewhere in the world, end of story.

With Anycast, the same IP address gets announced from multiple physical locations at the same time using BGP - the protocol that internet routers use to figure out how to move packets around the globe. Every router on the internet sees multiple paths to that IP and just picks the one it considers best, which usually means the shortest path in network terms (not necessarily the closest geographically).

Cloudflare does this with 1.1.1.1. They announce it from Singapore, London, New York, and 300+ other locations simultaneously. If you're in Singapore and send a packet to 1.1.1.1, BGP routes you to Singapore because that's the closest path. If you're in London, you land in London. Same IP, different physical destination, and the decision is made entirely by network topology.
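Here's a toy model of "same IP, different destination". It reduces BGP to a single rule, picking the shortest AS path, which is a big simplification: real routers apply local policy before path length ever comes into it. The site codes and path lengths are invented.

```python
# Hypothetical AS-path lengths from each client's network to each site
# announcing the same anycast IP. These numbers are made up.
AS_PATH_LENGTH = {
    "singapore": {"SIN": 1, "LHR": 4, "JFK": 5},
    "london": {"SIN": 4, "LHR": 1, "JFK": 3},
    "new-york": {"SIN": 5, "LHR": 3, "JFK": 1},
}


def landing_site(client_network):
    """Site whose announcement has the shortest AS path from this network."""
    paths = AS_PATH_LENGTH[client_network]
    return min(paths, key=paths.get)


# Every client sends to the same IP; only the network's view differs.
destinations = {client: landing_site(client) for client in AS_PATH_LENGTH}
```

Nothing in the client's request changes between the three cases. The destination is decided entirely by what each network's routers have learned.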

You still need DNS to resolve a domain name to that Anycast IP in the first place - but once you have the IP, the routing decision is handled by BGP, not DNS.

How Anycast handles failover

When an Anycast location goes down, you withdraw the BGP announcement from the location. Routers just stop sending traffic there and route to the next closest location instead. No TTL to wait out, no cache to expire.
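A withdrawal is easy to sketch as state: a set of sites currently announcing the prefix, and a lookup that only considers sites in that set. The `AnycastPrefix` class and the path costs below are hypothetical, and the model ignores convergence time entirely.

```python
class AnycastPrefix:
    """Tracks which sites currently announce one anycast prefix."""

    def __init__(self, cost_by_client):
        # cost_by_client: {client: {site: hypothetical path cost}}
        self.cost_by_client = cost_by_client
        self.announcing = set()

    def announce(self, site):
        self.announcing.add(site)

    def withdraw(self, site):
        self.announcing.discard(site)

    def route(self, client):
        """Lowest-cost site still announcing the prefix, or None."""
        costs = self.cost_by_client[client]
        live = [site for site in costs if site in self.announcing]
        return min(live, key=costs.get) if live else None


prefix = AnycastPrefix({"singapore-client": {"SIN": 1, "HKG": 2, "LHR": 4}})
for site in ("SIN", "HKG", "LHR"):
    prefix.announce(site)

before = prefix.route("singapore-client")   # SIN is announcing and cheapest
prefix.withdraw("SIN")                      # Singapore site goes down
after = prefix.route("singapore-client")    # next-best remaining site
```

The client never learns a new IP. Its traffic moves from `SIN` to `HKG` because the routers' view changed, which is why there's no client-side cache to wait out.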

On well-peered networks like Cloudflare's, this can happen in seconds. On the broader public internet, BGP convergence is messier - sometimes 30 to 90 seconds, occasionally longer in bad cases.

Anycast and DDoS

If someone attacks a regular Unicast IP, all that traffic floods into one datacenter. One location has to eat the entire attack. With Anycast, attack traffic naturally spreads across your locations because BGP routes each attacker's packets to their nearest node. Attackers in Singapore flood your Singapore node. Attackers in Germany flood Frankfurt. For big distributed volumetric attacks, the load gets split globally instead of landing in a single place.

This is fundamentally different from DNS-based distribution. With GeoDNS, each server has its own IP - an attacker can target one directly and overwhelm it. With Anycast, there's only one IP. The attacker can't choose which location to hit. The internet's routing decides where each packet goes, so the attack is spread whether the attacker wants it to be or not.

It's not perfect - a botnet concentrated in one region can still overwhelm a single node - but it's meaningful resilience.
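You can see both the benefit and the caveat with a few lines of arithmetic. This sketch assumes each attacking region's traffic lands on exactly one nearest site. The traffic volumes, site codes, and function names are all invented.

```python
from collections import Counter


def anycast_load(attack_gbps_by_region, nearest_site):
    """Attack traffic per site when each region lands on its nearest site."""
    load = Counter()
    for region, gbps in attack_gbps_by_region.items():
        load[nearest_site[region]] += gbps
    return dict(load)


def unicast_load(attack_gbps_by_region, target_site):
    """With a single unicast IP, one site absorbs everything."""
    return {target_site: sum(attack_gbps_by_region.values())}


NEAREST = {"SG": "SIN", "DE": "FRA", "US": "IAD"}   # hypothetical mapping

distributed = {"SG": 40, "DE": 35, "US": 25}        # spread-out botnet
concentrated = {"SG": 95, "DE": 5}                  # botnet mostly in one region

spread = anycast_load(distributed, NEAREST)
single = unicast_load(distributed, "IAD")
lopsided = anycast_load(concentrated, NEAREST)
```

For the distributed botnet, no site sees more than 40 Gbps under Anycast, while the unicast target takes the full 100. For the concentrated botnet, the Singapore site still takes 95, which is the caveat in practice.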

Where Anycast falls short

BGP only understands network topology. It doesn't know anything about legal jurisdiction or business rules.

GDPR is the clearest example. GDPR puts strict requirements on where and how EU personal data can be processed, and keeping that data within the EU is often the simplest path to compliance. With pure Anycast, you can't enforce that - BGP just sends traffic wherever is topologically closest.

Cloudflare actually built a whole product - Data Localization Suite - to solve this on top of their Anycast network. It lets you pin where TLS termination and traffic inspection happen for EU users. It works, but it's basically a layer of policy sitting on top of Anycast to compensate for something BGP can't express.

Most use both

A typical setup actually layers them together. Anycast (through something like Cloudflare) gets users off the public internet and onto a fast edge network as quickly as possible. If there's a CDN cache hit, great. If not, the edge uses DNS-based routing to pick the right origin region and forwards the request there.
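Put together, the request path looks something like this. It's a sketch of the flow only: the `Edge` class, the `ORIGIN_BY_AREA` table standing in for DNS-based routing, and the injected `fetch_from_origin` callable are all hypothetical.

```python
# Stand-in for the DNS-based routing decision: client area -> origin region.
ORIGIN_BY_AREA = {"SG": "ap-southeast-1", "GB": "eu-west-1", "US": "us-east-1"}
DEFAULT_ORIGIN = "us-east-1"


class Edge:
    """An edge node reached via Anycast, with a simple in-memory cache."""

    def __init__(self, fetch_from_origin):
        self.cache = {}
        self.fetch_from_origin = fetch_from_origin  # callable(origin, path)

    def handle(self, path, client_area):
        """Serve from cache if possible, otherwise fetch from the chosen origin."""
        if path in self.cache:
            return self.cache[path], "cache-hit"
        origin = ORIGIN_BY_AREA.get(client_area, DEFAULT_ORIGIN)
        body = self.fetch_from_origin(origin, path)
        self.cache[path] = body
        return body, f"origin:{origin}"
```

The first request for a path goes to the origin picked for that user's area. Repeats are answered at the edge and never touch an origin region at all.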